Purpose

Using this statement you can control the distribution of rows across the cluster server and within a server.

Prerequisite

-

You need either the system privilege

USE ANY SCHEMAor the object privilegeUSAGEon the target schema, or the schema must be owned by you or one of your assigned roles. - You need either the system privilege

ALTER ANY TABLEor the object privilegeALTERon the table or its schema, or the table must be owned by you or one of your assigned roles.

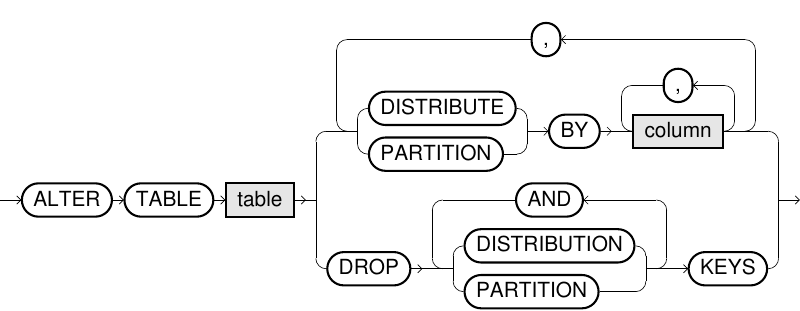

Syntax

alter_distribution/partitioning::=

Usage notes

- Setting proper distribution and partition keys can significantly improve performance of queries using these tables. See DISTRIBUTE BY and PARTITION BY for further details on proper usage.

- Altering the distribution or partition keys can take some time depending on the size of the table. After running the ALTER TABLE statement, the data blocks are re-distributed among nodes or re-arranged, which requires heavy network usage. Queries running at the same time as the ALTER TABLE statement may experience negative performance until the ALTER TABLE statement has finished.

- Setting or dropping the distribution or partitioning keys will cause all internal indexes on the given table to be rebuilt.

- If the table distribution/partitioning was not defined explicitly, the system distributes the rows equally across the cluster nodes.

- The explicit distribution/partitioning of a table can also be defined directly in the CREATE TABLE statement. This status is sustained when inserting, deleting or updating rows.

- Using the

DROP DISTRIBUTION/PARTITION KEYSstatement, you can undo the explicit distribution/partitioning of a table. After that, the rows will again be distributed randomly, but equally across the cluster nodes. - You can identify if a table has an explicit distribution or partition key by viewing the system table EXA_ALL_COLUMNS. The column

COLUMN_IS_DISTRIBUTION_KEYshows TRUE for all columns that are distribution keys. The columnCOLUMN_PARTITION_KEY_ORDINAL_POSITIONshows its ordinal position if it is included in the partition key.

DISTRIBUTE BY

- When you join two tables, and the distribution keys for both of the tables are a subset of the join columns, then the join can be computed locally on the server nodes without having to transfer any data across the cluster. For more details and an example, see Distribution keys.

- For joins to be converted to local joins, both of the columns in the join condition must be the same data type.

- Executing an aggregation using

GROUP BYon a single table will be accelerated if the distribution columns are a subset of the group by columns. - If a table is explicitly distributed, the distribution of the rows across the cluster nodes is determined by the hash value of the distribution keys. The order of the defined columns is irrelevant. The concrete mapping between column values and database nodes can be simulated with function VALUE2PROC.

- Distribution keys should not be set on columns which are often used in

WHEREclauses. Doing so may negatively impact performance. - If you define inappropriate distribution keys which would lead to an imbalance of rows in the cluster, the command is aborted, and a corresponding error message is thrown.

PARTITION BY

- Partitioning of a table can be useful if it is extremely large and does not fit in the main memory anymore. For more details and an example, see Partitioning.

- You only have to define the columns specifying the partitioning key. The actual partitions are automatically organized by the system.

- In case of a selective filter condition on the partitioning column of a very large table, less data has to be loaded from disk if it does not yet reside in the main memory cache.

- This is applicable to

DELETEoperations that can be processed more efficiently. - Partitioning keys may only contain fixed-sized sortable data types: DECIMAL, DATE, TIMESTAMP, DOUBLE, BOOLEAN, INTERVAL, HASHTYPE.

- The order of the defined columns is relevant.

Example

CREATE TABLE my_table (order_id INT,

shop_id INT,

order_item VARCHAR(100),

order_price DOUBLE,

order_date DATE);

ALTER TABLE my_table DISTRIBUTE BY shop_id, PARTITION BY order_date;

DESCRIBE my_table;Results

| COLUMN_NAME | SQL_TYPE | NULLABLE | DISTRIBUTION_KEY | PARTITION_KEY |

|---|---|---|---|---|

| PARTITION_KEY | DECIMAL(18,0) | TRUE | FALSE | FALSE |

| SHOP_ID | DECIMAL(18,0) | TRUE | TRUE | FALSE |

| ORDER_ITEM | VARCHAR(100) UTF-8 | TRUE | FALSE | FALSE |

| ORDER_PRICE | DOUBLE | TRUE | FALSE | FALSE |

| ORDER_DATE | DATE | TRUE | FALSE | TRUE |

ALTER TABLE my_table DROP DISTRIBUTION KEYS;